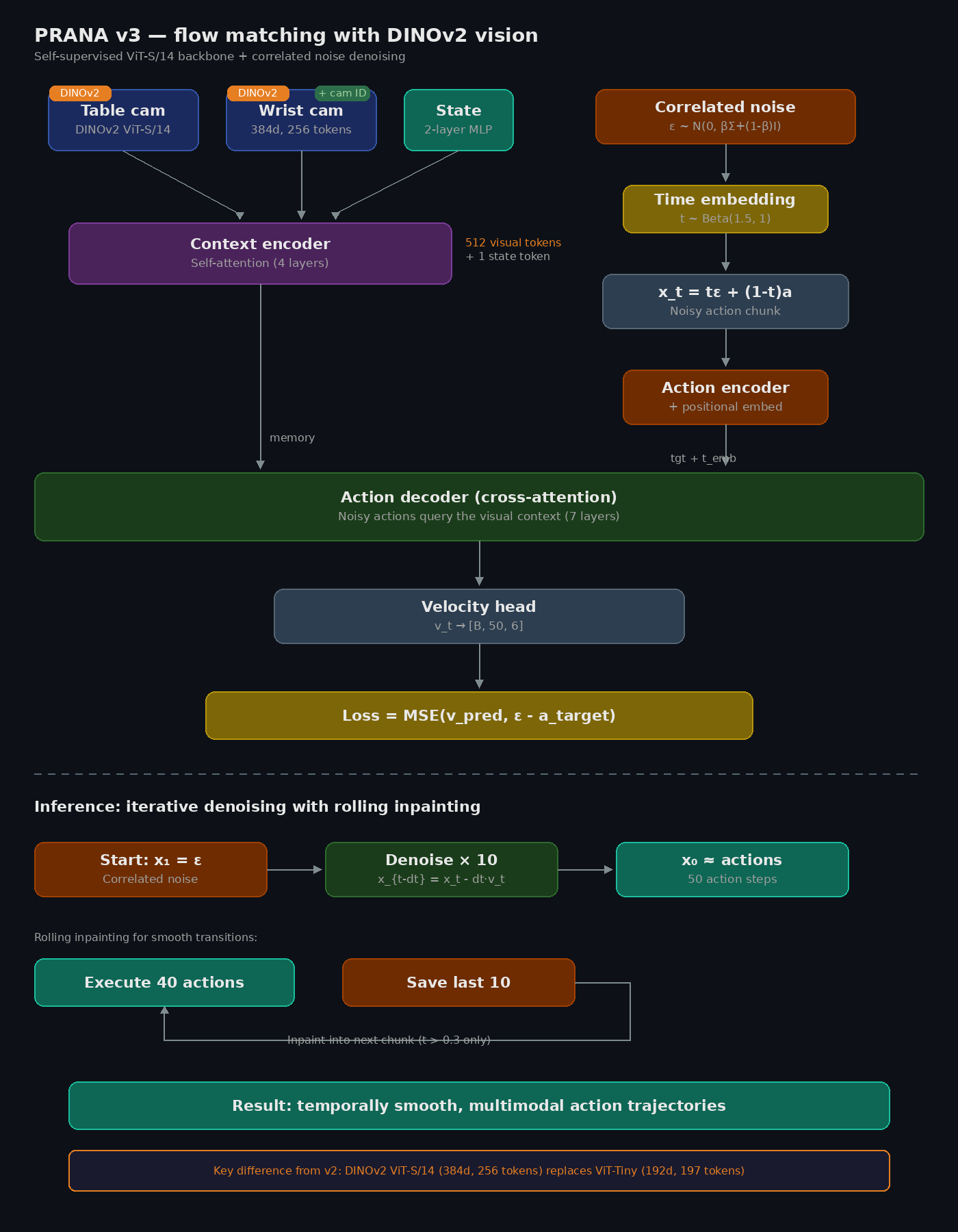

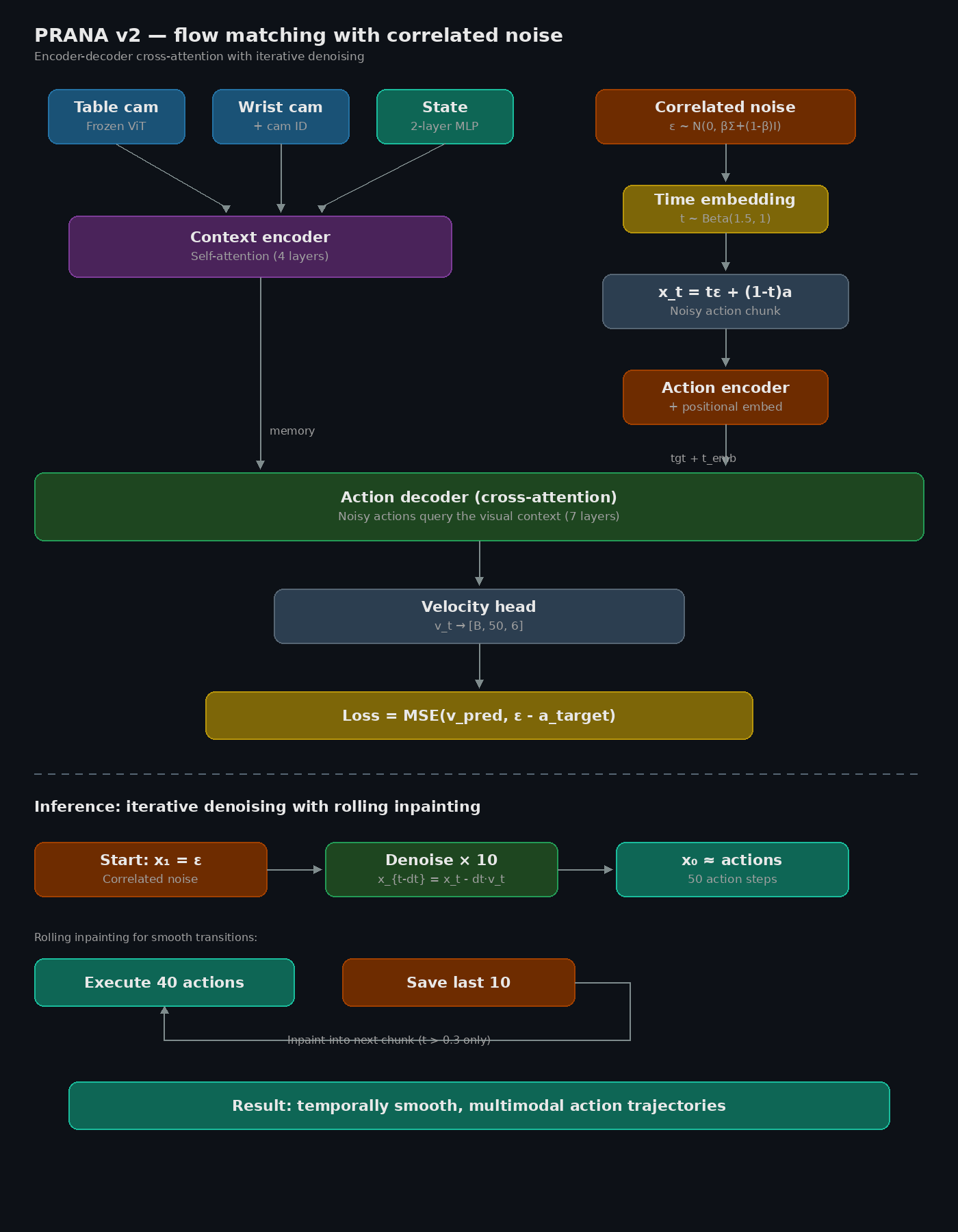

Iterative denoising with correlated noise

Instead of predicting one average trajectory, the model starts from noise shaped like real robot motions and iteratively refines it into precise actions. Cross-attention lets the decoder actually look at the scene before deciding what to do.

- Frozen ViT-Tiny with camera-ID embeddings

- 4-layer context encoder (self-attention over vision + state)

- 7-layer action decoder (cross-attention to context)

- Flow matching: 10 Euler denoising steps at inference

- Correlated noise from Cholesky decomposition of action covariance

- Rolling inpainting: execute 40, save 10 for smooth transitions

- ~11M trainable params, 100K training steps

- 22 ms inference per chunk on RTX 5060
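
The sampling loop implied by the flow-matching and correlated-noise bullets can be sketched roughly as follows. This is a minimal NumPy illustration, not the actual implementation. Several things here are assumptions: the covariance is taken over whole flattened action chunks (the source does not say whether it spans time, action dimensions, or both), the noise is zero-mean, time runs from t=0 (noise) to t=1 (actions), and the hypothetical `toy_velocity` stands in for the trained action decoder. The function names are made up for illustration.

```python
import numpy as np


def fit_noise_cholesky(actions, jitter=1e-6):
    """Cholesky factor L of the empirical covariance of flattened action chunks.

    actions: array of shape (num_chunks, horizon, action_dim).
    Assumption: covariance is computed over the whole flattened chunk.
    """
    flat = actions.reshape(actions.shape[0], -1)
    cov = np.cov(flat, rowvar=False)
    cov += jitter * np.eye(cov.shape[0])  # small diagonal term keeps it positive definite
    return np.linalg.cholesky(cov)


def sample_correlated_noise(chol, horizon, action_dim, rng):
    """Draw z ~ N(0, I) and return L @ z, whose covariance is L @ L.T."""
    z = rng.standard_normal(chol.shape[0])
    return (chol @ z).reshape(horizon, action_dim)


def euler_denoise(velocity_fn, x0, num_steps=10):
    """Integrate dx/dt = v(x, t) from t=0 to t=1 with fixed-size Euler steps."""
    x = x0.copy()
    dt = 1.0 / num_steps
    for k in range(num_steps):
        t = k * dt
        x = x + dt * velocity_fn(x, t)
    return x


# Toy data: random walks stand in for temporally smooth demonstrations.
rng = np.random.default_rng(0)
horizon, action_dim = 8, 2
demo = np.cumsum(rng.standard_normal((200, horizon, action_dim)), axis=1)

chol = fit_noise_cholesky(demo)
x0 = sample_correlated_noise(chol, horizon, action_dim, rng)

# Hypothetical stand-in for the learned velocity field: for a straight-line
# path from noise to a fixed target, the velocity at (x, t) is (target - x) / (1 - t).
target = demo[0]


def toy_velocity(x, t):
    return (target - x) / (1.0 - t)


actions = euler_denoise(toy_velocity, x0, num_steps=10)
```

In a real policy the velocity would come from the decoder conditioned on the encoded vision and state context, and the 10 steps would match the inference setting listed above. The toy field is chosen only because its Euler integration lands on the target, which makes the loop easy to check.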